CodeSubmit Library

Python Coding Assignments on CodeSubmit

Looking for a better way to hire Python developers? CodeSubmit is the best coding challenge platform for assessing Python devs! Our carefully curated library of Python coding assessments helps you identify top candidates.

Uncover candidate competencies and hire your next Python developer with confidence.

Trusted by engineering teams worldwide

Identify Top Python Candidates

Evaluate for on-the-job skills

Evaluate Python developers with Python-specific coding assessments. We support the most popular Python frameworks so that you can be confident that your next hire is proficient in your stack.

Choose from our library of Python coding challenges or upload your own. We also have Python assignments specifically for data engineers! Quickly and accurately identify qualified candidates and hire the right Python developer for your team.

Better hiring decisions

Since you're qualifying candidates based on their real Python language (and framework) skills, you can rest easy knowing you're making an informed hiring decision.

Our assignments are challenging but fun, so your future candidates will appreciate your great hiring process. And for your follow-up interviews, we've collected a list of great Python interview questions!

How it works

Create an account with CodeSubmit, select one of our excellent Python coding assessments or upload your own, and start inviting candidates. Our suite of review features makes identifying top performers simple.

Reduce hiring time and snag the best Python developer for your growing team.

Git Tree Review Flow

How CodeSubmit turns a repo into a review map

CodeSubmit does not jump from a Python take-home straight to a thumbs-up or thumbs-down. The review flow starts by mapping the full git tree, then filtering obvious generated and vendor noise so reviewers get a fair file map before deeper review begins.

File listings alone do not decide anything. The tree is the map. Reviewers then read the README, manifests, and top-modified files that explain how the submission works, and only after that turn it into a candidate-friendly take-home review and a sharper CodePair follow-up.

File listings are discovery, not evidence. Generated and vendor noise gets filtered so the review starts from candidate-authored work.

The result is a cleaner handoff for hiring teams: concrete paths to inspect, stronger AI summaries, and live follow-up topics that stay anchored to the repo.
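To make the filtering step concrete, here is a minimal sketch of how a list of repo paths (for example, the output of `git ls-tree -r --name-only HEAD`) could be reduced to a review map. The noise rules, `is_noise`, and `review_map` are hypothetical names chosen for illustration; CodeSubmit's actual filter is not described on this page.

```python
from pathlib import PurePosixPath

# Hypothetical noise rules for illustration only; not CodeSubmit's real filter.
NOISE_DIRS = {"node_modules", "vendor", "venv", ".venv", "__pycache__", "dist", "build"}
NOISE_SUFFIXES = (".pyc", ".min.js", ".map")


def is_noise(path: str) -> bool:
    """Return True for paths that look generated or vendored."""
    parts = PurePosixPath(path).parts
    if any(part in NOISE_DIRS or part.endswith(".egg-info") for part in parts):
        return True
    return path.endswith(NOISE_SUFFIXES)


def review_map(tree_paths):
    """Keep only paths that are likely candidate-authored, in sorted order."""
    return sorted(p for p in tree_paths if not is_noise(p))


if __name__ == "__main__":
    sample = [
        "README.md",
        "app/main.py",
        "venv/lib/site.py",
        "app/__pycache__/main.cpython-311.pyc",
        "tests/test_main.py",
    ]
    for path in review_map(sample):
        print(path)
```

The list that comes out is still only discovery. It tells a reviewer where to look, not what to conclude.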

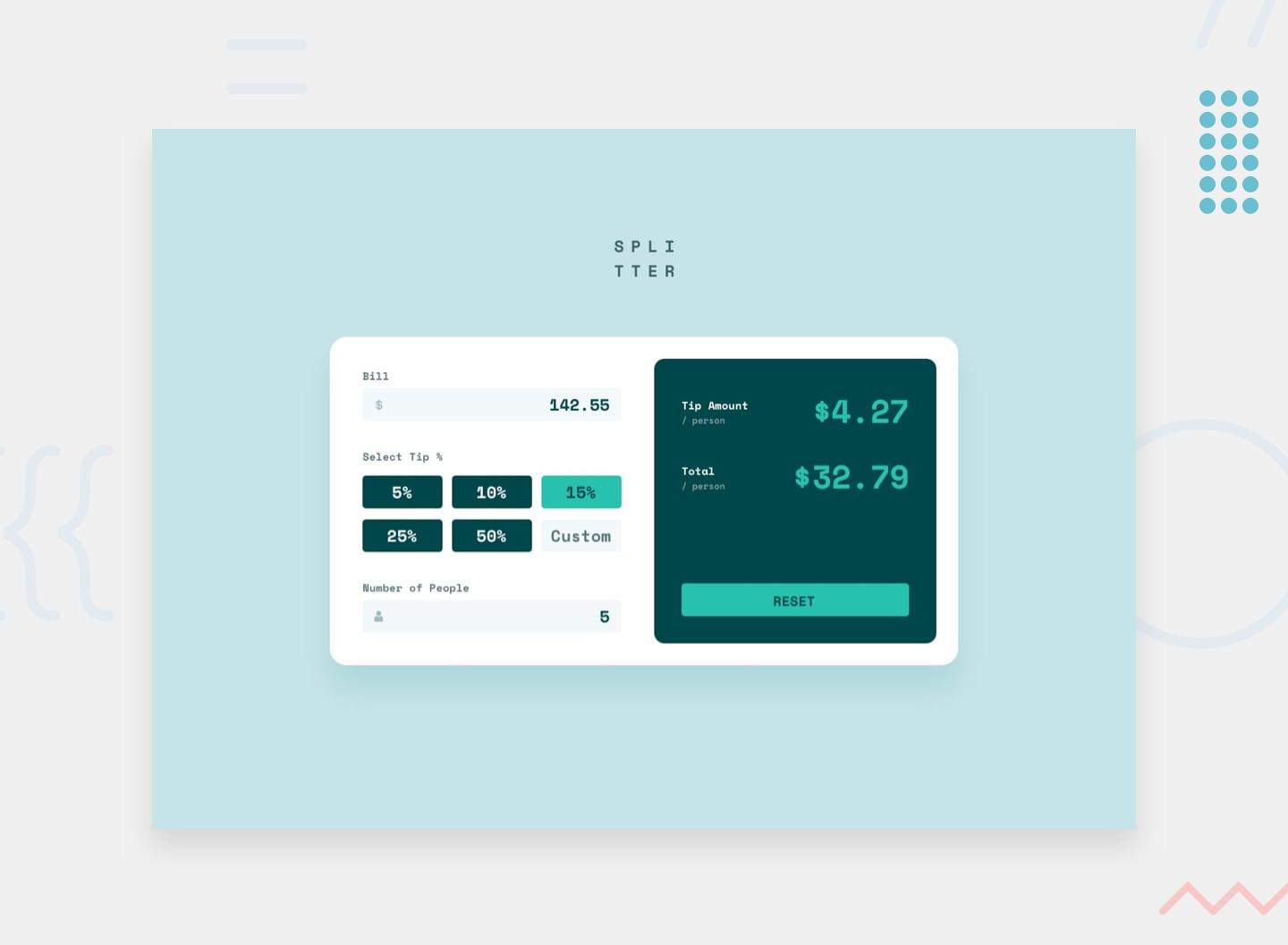

Real-World Take-Home

Use Python take-homes to expose service judgment

Python work rarely comes in one neat shape. One role leans on Django or FastAPI endpoints, another on data shaping, background jobs, or internal automation. A strong take-home should feel like a small slice of that real work, not a syntax drill detached from the job.

Keep the scope humane, keep the repo reviewable, and make the task close enough to the stack that your team can learn something real from how a candidate structures validation, tests, and service boundaries. That matters whether you are hiring for Flask, API-heavy backend work, or more data-oriented Python roles such as data engineers.

Good Python tasks show whether a candidate can keep validation, data shaping, background work, and tests understandable under real team pressure.

AI Review

AI makes Python review easier to triage

Once the repo is back, AI should help your team triage what matters quickly: validation, data shaping, side effects, background work, tests, and whether the code still reads well when the happy path breaks. The point is not to hand final hiring judgment to a model.

The point is to shorten the distance between first read and a useful Python CodePair follow-up. Reviewers get a cleaner map of where the code is solid, where it gets noisy, and what to ask next in a Python interview instead of spending the first ten minutes reconstructing the repo.

The strongest Python submissions keep endpoints, business logic, and persistence readable enough that another engineer could extend them without first untangling the repo.

Python gets slippery when assumptions hide inside helpers. Look for candidates who validate input clearly, shape data deliberately, and name transformed values well.
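As a rough illustration of what clear validation and deliberate data shaping can look like in a submission, consider the sketch below. The `OrderLine` type, the `parse_order_line` function, and the field names are invented for this example and do not come from any CodeSubmit assignment.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation


@dataclass(frozen=True)
class OrderLine:
    """Validated, typed view of one raw order line (hypothetical shape)."""
    sku: str
    quantity: int
    unit_price: Decimal

    @property
    def line_total(self) -> Decimal:
        # The derived value has a name that says what it is.
        return self.unit_price * self.quantity


def parse_order_line(raw: dict) -> OrderLine:
    """Validate raw input up front so no assumption hides in a helper."""
    sku = raw.get("sku")
    if not isinstance(sku, str) or not sku.strip():
        raise ValueError("sku is required")

    try:
        quantity = int(raw["quantity"])
    except (KeyError, TypeError, ValueError):
        raise ValueError("quantity must be an integer") from None
    if quantity <= 0:
        raise ValueError("quantity must be positive")

    try:
        unit_price = Decimal(str(raw["unit_price"]))
    except (KeyError, InvalidOperation):
        raise ValueError("unit_price must be a decimal number") from None
    if not unit_price.is_finite() or unit_price < 0:
        raise ValueError("unit_price must be a non-negative number")

    return OrderLine(sku=sku.strip(), quantity=quantity, unit_price=unit_price)
```

A reviewer can read this top to bottom and see every rule the input must satisfy, which is the kind of signal the paragraph above describes.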

Whether the task uses jobs, retries, or async work, good candidates keep side effects visible and the control flow understandable under failure.
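One way a candidate might keep retries and their side effects visible is sketched below. `TransientError`, `call_with_retry`, and the backoff numbers are assumptions made for this example, not a pattern CodeSubmit prescribes.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""


def call_with_retry(
    operation: Callable[[], T],
    *,
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying only on TransientError with exponential backoff.

    The sleep function is injectable so the waiting side effect stays
    visible and can be replaced in tests.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == attempts:
                raise  # Out of attempts: surface the failure to the caller.
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Any other exception propagates immediately, so the failure path is as easy to follow as the happy path.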

Useful take-home work leaves clues behind: targeted tests, clear assumptions, and enough logging or structure that follow-up review starts from signal instead of guesswork.

CodePair Follow-Up

Follow up live on the choices that matter

Python interviews get better when the conversation starts from the candidate's own service or script. Open the code they already wrote and push on concrete choices: a validation edge case, a retry path, a missing test, a new field, or one noisy piece of orchestration.

That makes CodePair feel less like performance theater and more like real collaboration. You see how the candidate debugs, explains tradeoffs, and improves code that already has context.

Suggested Coverage

Fit the Python exercise to the actual role

Keep the exercise close to the actual job. Backend teams should lean on request handling, business logic boundaries, and test coverage. Data or automation roles should lean on shaping messy input, clear assumptions, and repeatable output. If the role will touch the stack directly, pair the task with the right framework and follow-up guide instead of flattening Python into one generic paragraph.

If you are calibrating the interview depth too, pair the take-home with the relevant support page, whether that is the Python interview guide or a role page for backend or data engineering.

Backend path: use it when the role depends on request handling, validation, service boundaries, and code another backend engineer can safely extend.

Data engineering path: use it when the job leans on data shaping, repeatable scripts, ETL-style thinking, or Python work that lives closer to pipelines than endpoints.

Live follow-up path: use it when you want the take-home to roll straight into a deeper live conversation about error handling, refactors, test gaps, and tradeoffs.

Complete Your Technical Assessment

Pair Take-Home Tests with Live Coding

Combine Python take-home challenges with live CodePair sessions. Watch candidates walk through their solution, ask follow-up questions, and see how they handle real-time problem solving.

Perfect for assessing both independent work quality and collaborative coding skills in a single hiring pipeline.

The communication between hiring managers, recruiters and candidates has been incredibly improved since we started using CodeSubmit. There is no 'back and forth' anymore and the technical assessment is running smoothly!

Authentic tasks, not algorithm puzzles.

Take-Home Coding Challenges

Our extensive library of practical coding challenges provides an accurate assessment of candidate programming abilities while delivering a respectful and engaging interview experience.